What Is A/B Testing

We have leveraged two signature features - Relevance Tuning and Analytics - to create a new tool: A/B Testing.

Let’s see what this means:

- Relevance tuning lets you give your users the best search results.

- Analytics makes relevance tuning data-driven, ensuring that your configuration choices are sound and effective.

Relevance tuning, however, can be tricky. The choices are not always obvious. It is sometimes hard to know which settings to focus on and what values to set them to. It is also hard to know if what you’ve done is useful or not. What you need is input from your users, to test your changes live.

This is what A/B Testing does. It lets you create two alternative search experiences with unique settings, put them both live, and see which one performs best.

Advantages of A/B Testing

With A/B Testing, you run alternative indices or searches in parallel, capturing Click and Conversion Analytics to compare effectiveness.

You make small incremental changes to your main index or search and have those changes tested - live and transparently by your customers - before making them official.

A/B Testing goes directly to an essential source of information - your users - by including them in the decision-making process, in the most reliable and least burdensome way.

These tests are widely used in the industry to measure the usability and effectiveness of a website. Algolia’s focus is on measuring search and relevance: are your users getting the best search results? Is your search effective in engaging and retaining your customers? Is it leading to more clicks, more sales, more activity for your business?

Implementing A/B Testing

Algolia A/B Testing was designed with simplicity in mind. This user-friendliness enables you to perform tests regularly. Assuming you’ve set up Click and Conversion Analytics, A/B Testing doesn’t require any coding intervention. It can be managed from start to finish by people with no technical background.

Collect clicks and conversions

To perform A/B testing, you need to set up your Click and Conversion Analytics: this is the only way of testing how each of your variants is performing.

While A/B testing itself does not require coding, sending clicks and conversions requires coding.
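As a rough sketch of the coding involved, the snippet below builds the JSON body for one click event and one conversion event. The field names (eventType, eventName, index, userToken, queryID, objectIDs, positions) are assumptions based on Algolia’s Insights events format and should be checked against the current Click and Conversion Analytics reference. The index name, user token, query ID, and object ID are made-up values. The snippet sends nothing over the network.

```python
import json


def build_event(event_type, event_name, index, user_token, query_id, object_ids, positions=None):
    """Build one analytics event as a plain dict (field names are assumptions)."""
    if event_type not in ("click", "conversion"):
        raise ValueError("event_type must be 'click' or 'conversion'")
    event = {
        "eventType": event_type,
        "eventName": event_name,
        "index": index,
        "userToken": user_token,
        "queryID": query_id,  # ties the event back to the search that produced it
        "objectIDs": list(object_ids),
    }
    if event_type == "click":
        # A click also records where each clicked record sat in the results.
        if positions is None or len(positions) != len(object_ids):
            raise ValueError("a click needs one position per objectID")
        event["positions"] = list(positions)
    return event


# Hypothetical values, for illustration only.
events = [
    build_event("click", "Result Clicked", "catalog", "user-123", "query-abc", ["item-1"], [2]),
    build_event("conversion", "Item Purchased", "catalog", "user-123", "query-abc", ["item-1"]),
]
request_body = json.dumps({"events": events})
```

Keeping event construction in one small function makes it easier to confirm that every click and conversion carries the identifiers each variant’s analytics depend on.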

Set up the index or query

We allow two kinds of A/B Tests:

- Test different index settings (applies to any parameter that can only be used in the settings scope). This requires creating replicas, which increases your record count.

- Test different search parameters (no index setup required).

Run the A/B test

After creating or selecting your indices, you can start your A/B tests in two steps.

- Use the A/B test tab of the Dashboard to create your test,

- Start the A/B test.

After letting your test run and collect analytics, you can review, interpret, and act on the results. You can then create new A/B tests, iteratively optimizing your search!

Accessing A/B testing through the Dashboard

To access A/B testing analytics and create A/B tests, you should go through the A/B testing tab of the Dashboard. The A/B testing section provides very basic analytics, so you may want to get more information through the Analytics tab. However, because all search requests to an A/B test first target the primary (‘A’) index, viewing a test directly on the Analytics tab will include searches to both the A and B variants.

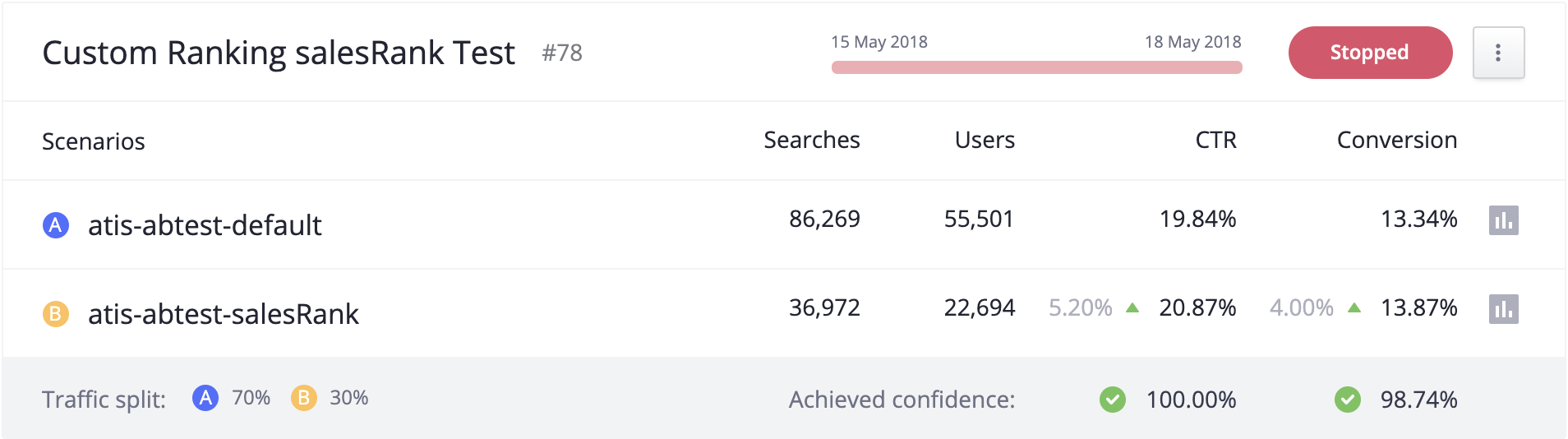

To let you view detailed analytics for each variant independently, we automatically add tags to A/B test indexes. To access the variant’s analytics, click on the small analytics icon on the right of each index description in the A/B test tab: it appears as a small bar graph in the figure above. The icon will automatically redirect you to the Analytics tab with the appropriate settings (time range and analyticsTags) applied.

The automatically added tags follow the structure alg#abtest:${abTestID}+variant:${variant}.

For example, creating A/B test “42” with two variants results in the two tags alg#abtest:42+variant:1 and alg#abtest:42+variant:2.
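If you filter analytics by these tags yourself, a small helper can build and parse them. This is a minimal sketch that follows the structure shown above. It assumes that both the test ID and the variant number are integers, as in the example. The function names are hypothetical.

```python
import re

# Pattern for tags such as "alg#abtest:42+variant:1" (numeric IDs assumed).
_TAG_PATTERN = re.compile(r"^alg#abtest:(\d+)\+variant:(\d+)$")


def variant_tag(ab_test_id, variant):
    """Return the analytics tag for one variant of an A/B test."""
    return f"alg#abtest:{ab_test_id}+variant:{variant}"


def parse_variant_tag(tag):
    """Return (ab_test_id, variant) for a matching tag, or None otherwise."""
    match = _TAG_PATTERN.match(tag)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


tags = [variant_tag(42, 1), variant_tag(42, 2)]
```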

Example A/B tests

As previously mentioned, we allow two kinds of A/B Tests:

- comparing different index settings,

- comparing different search settings.

For index-based testing, you can test:

- your index settings,

- your data format.

For search-based settings, you can test any search-time setting, including:

- typo tolerance,

- Rule enablement,

- optional filters,

- etc.

Example 1, Changing your index settings

Add a new custom ranking with the number_of_likes attribute

You’ve recently offered your users the ability to like your items, which include music, films, and blog posts. You’ve gathered “likes” data, and you’d like to use this information to sort your search results.

Before you implement such a big change, you want to make sure it improves your search. You can do this with A/B testing.

- Create your A/B test indices:

  - Add a number_of_likes attribute to your main catalog index (this is variant A in your A/B test), and then create variant B as a replica of variant A.

  - Adjust variant B’s settings by sorting its records based on number_of_likes.

- Name your test “Test new ranking with number_of_likes” in the A/B testing tab of the Dashboard.

- Set the test to run for 30 days, to make sure you get enough data and a good variety of searches. Set the date parameters accordingly.

- Set B at only 10% usage, because of the uncertainty of introducing a new sorting: you don’t want to change the user experience for too many users until you’re absolutely sure the change is desirable.

- When your test reaches 95% confidence or greater, see whether your change improves your search, and whether the improvement is large enough to justify implementation costs.
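To give a feel for what a 95% confidence threshold means, here is a minimal sketch that uses a standard two-proportion z-test on click-through rates. This is a textbook technique chosen for illustration. It is not necessarily how the Dashboard computes confidence. The search and click counts are invented, and they mirror the 90/10 traffic split from this example.

```python
import math


def confidence_rates_differ(clicks_a, searches_a, clicks_b, searches_b):
    """Two-sided confidence (0 to 1) that variants A and B have different click-through rates."""
    rate_a = clicks_a / searches_a
    rate_b = clicks_b / searches_b
    # Pooled rate under the assumption that both variants perform the same.
    pooled = (clicks_a + clicks_b) / (searches_a + searches_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / searches_a + 1 / searches_b))
    if std_err == 0:
        return 0.0
    z = (rate_b - rate_a) / std_err
    # 1 minus the two-sided p-value equals erf(|z| / sqrt(2)).
    return math.erf(abs(z) / math.sqrt(2))


# Invented numbers: 90% of searches went to A, 10% to B.
confidence = confidence_rates_differ(1800, 9000, 240, 1000)
significant = confidence >= 0.95
```

Because variant B receives only 10% of the traffic, it collects data slowly. This is one reason the example runs for 30 days.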

Example 2, Reformatting your data

Add a new search attribute: short_description

Your company has added a new short description to each of your records.

To see if adding the short description as a searchable attribute improves your relevance, here’s what you need to do:

- Configure your test index (you only need one index for this test).

- Add a new searchable attribute short_description to your main catalog index. Because you want to test a search-time setting for your index, you can use your main index as both variants A and B in your test.

- Create an A/B test with the name “Testing the new short description” in the A/B testing tab of the Dashboard.

- Apply the restrictSearchableAttributes query parameter in variant B. Include all searchable attributes that you want to test, except short_description.

- Set the test length to 7 days. This assumes you have enough traffic for 7 days of testing to support a reasonable conclusion.

- Direct 30% of traffic to variant B. As in example 1, the split is cautious because of the uncertainty: you’d rather not risk degrading an already good search with an untested attribute.

- Once you reach 95% confidence, you can judge the improvement and the cost of implementation to see whether this change is beneficial.
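The restrictSearchableAttributes value for variant B can be derived from the index’s searchable attributes. This is a minimal sketch: the attribute names title, brand, and description are hypothetical stand-ins for your own, and the helper function is illustrative only.

```python
def variant_b_parameters(searchable_attributes, excluded="short_description"):
    """Return search parameters that search every attribute except the excluded one."""
    kept = [name for name in searchable_attributes if name != excluded]
    if len(kept) == len(searchable_attributes):
        # Nothing was removed, so both variants would behave identically.
        raise ValueError(f"{excluded!r} is not in the searchable attributes")
    return {"restrictSearchableAttributes": kept}


# Hypothetical searchable attributes of the main catalog index.
all_attributes = ["title", "brand", "description", "short_description"]
params_b = variant_b_parameters(all_attributes)
```

With these parameters, variant A searches every attribute, including short_description. Variant B searches the same index without it.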

Example 3, Enabling/disabling Rules: compare a query with and without merchandising

You can use A/B testing to check the effectiveness of your Rules. This example tests search with Rules enabled against search with Rules disabled.

Your company has just received the new iPhone. You want this item to appear at the top of the list for all searches that contain “apple”, “iphone”, or “mobile”.

To use an A/B test to see whether putting the new iPhone at the top of your results encourages traffic and sales, here’s what you need to do:

- Create Rules for your main catalog index (this is variant A in the test) that promote your new iPhone record.

- Configure your test index (you only need one index for this test).

- Ensure that Rules are enabled for variant A.

- Create a test with the name “Testing newly released iPhone merchandising” in the A/B testing tab of the Dashboard.

- Create your A/B test with variant A as both variants.

- Add enableRules as the varying query parameter in one variant.

- Set the test length to 7 days. This assumes you have enough traffic for 7 days of testing to support a reasonable conclusion.

- As in example 1, give variant B only 30% usage (a 70/30 split) because of the uncertainty: you’d rather not risk degrading an already good search with an untested change.

- Once you reach 95% confidence, you can judge the improvement and the cost of implementation to see whether this change is beneficial.
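The setup above can be summarized as a small data structure. This is only a sketch for planning or documenting the test. The dictionary keys (name, end_at, variants, traffic_percentage, search_parameters) are hypothetical and are not the Dashboard’s or any API’s format. Only enableRules is the actual search parameter named in this example. The sketch assumes that variant B is the one with Rules turned off.

```python
from datetime import datetime, timedelta, timezone


def build_rules_test(index_name, days=7, traffic_b=30, start=None):
    """Describe a two-variant test on one index where variant B disables Rules."""
    if not 0 < traffic_b < 100:
        raise ValueError("traffic_b must be between 1 and 99")
    start = start or datetime.now(timezone.utc)
    return {
        "name": "Testing newly released iPhone merchandising",
        "end_at": (start + timedelta(days=days)).isoformat(),
        "variants": [
            # Variant A: the main index as-is, with Rules enabled.
            {"index": index_name, "traffic_percentage": 100 - traffic_b, "search_parameters": {}},
            # Variant B: the same index, with Rules switched off at query time.
            {
                "index": index_name,
                "traffic_percentage": traffic_b,
                "search_parameters": {"enableRules": False},
            },
        ],
    }


# "catalog" is a made-up index name; the start date is fixed for reproducibility.
plan = build_rules_test("catalog", start=datetime(2024, 1, 1, tzinfo=timezone.utc))
```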